In November 2015, I joined Atlassian’s product growth team.

Over two years, I helped the team define its own process and launched multiple experiments on the AARRR funnels of the company's flagship products. Overall, we increased Atlassian’s user base by hundreds of thousands of new active users.

This experience taught me new design methodologies, which refined my overall understanding of product design for the digital medium.

Product designer, full time

Nov 2015 – Sept 2017

Increase active users

At the intersection of marketing and product, Atlassian’s product growth team is a crucial pillar in achieving the company’s mission to "unleash the potential in every team".

The department optimises the AARRR funnels of JIRA, Confluence and Bitbucket through multivariate experimentation.

It also provides and maintains an experimentation platform and growth tools, and feeds the company with insights derived from experiments and customer research.

As one of the first designers on the team, I collaborated with engineers and product analysts to build a functioning growth process and improve the growth tooling.

To do so, my manager and I introduced user-centred methodologies that helped increase Atlassian’s user base by hundreds of thousands of new active users.

Out of the dozens of experiments I designed and launched on Bitbucket, JIRA and Confluence, I have selected a few examples to illustrate the workflow I learned and practised at Atlassian.

Due to the company’s confidentiality requirements, the actual subject of my work can’t be disclosed, and some of the following designs, numbers and text have been modified, blurred or omitted on purpose.

The principal difference between a product designer and a growth designer is the environment they work in. Even though the design processes can be similar, the product designer creates value from nothing, while the growth designer works on top of the product, connecting users (new or existing) to this value.

Therefore, it is essential for the growth team to understand the current state of the product before thinking of any solution.

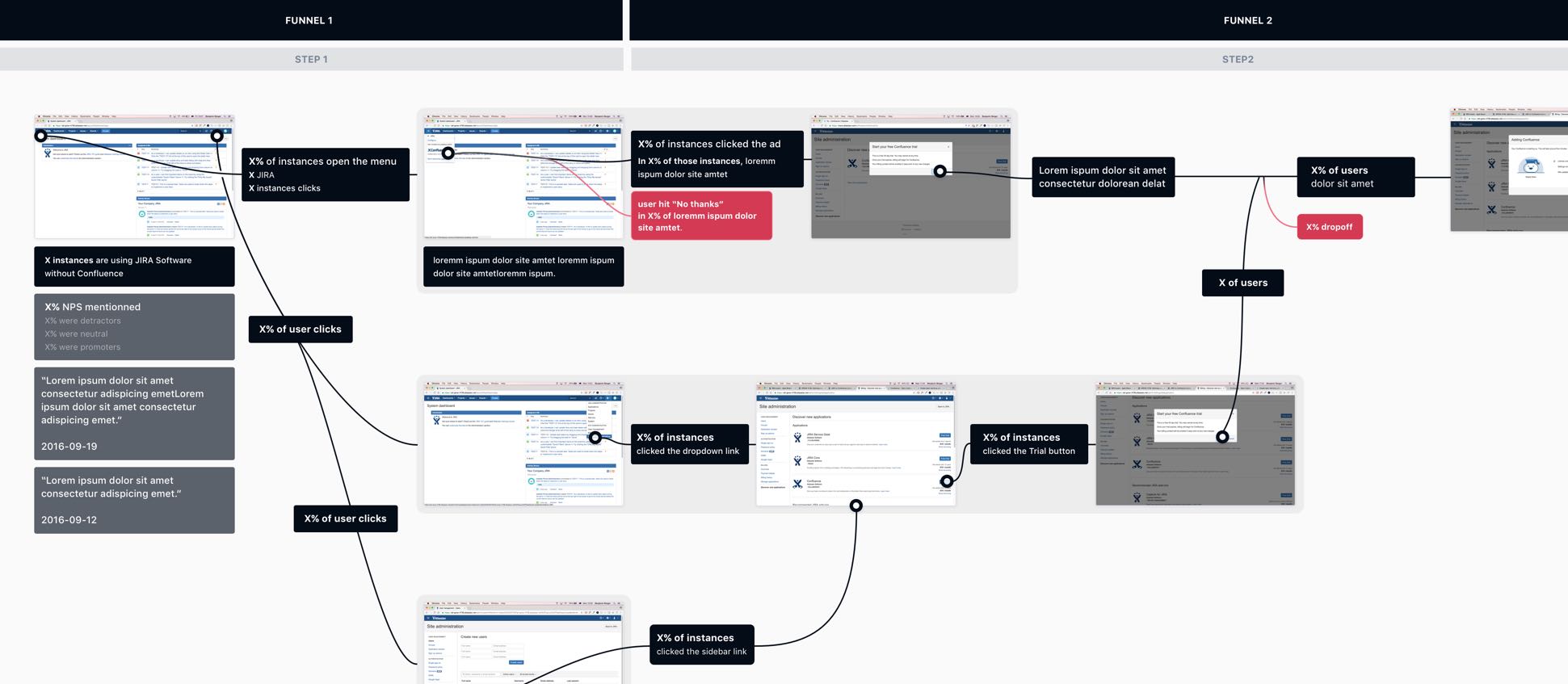

Part of my work was to help the team visualise and define the funnels we would operate in.

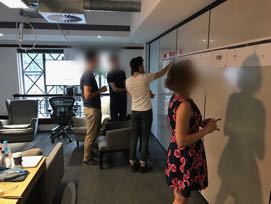

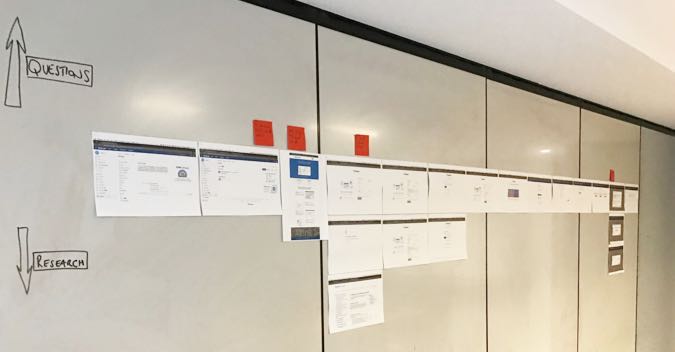

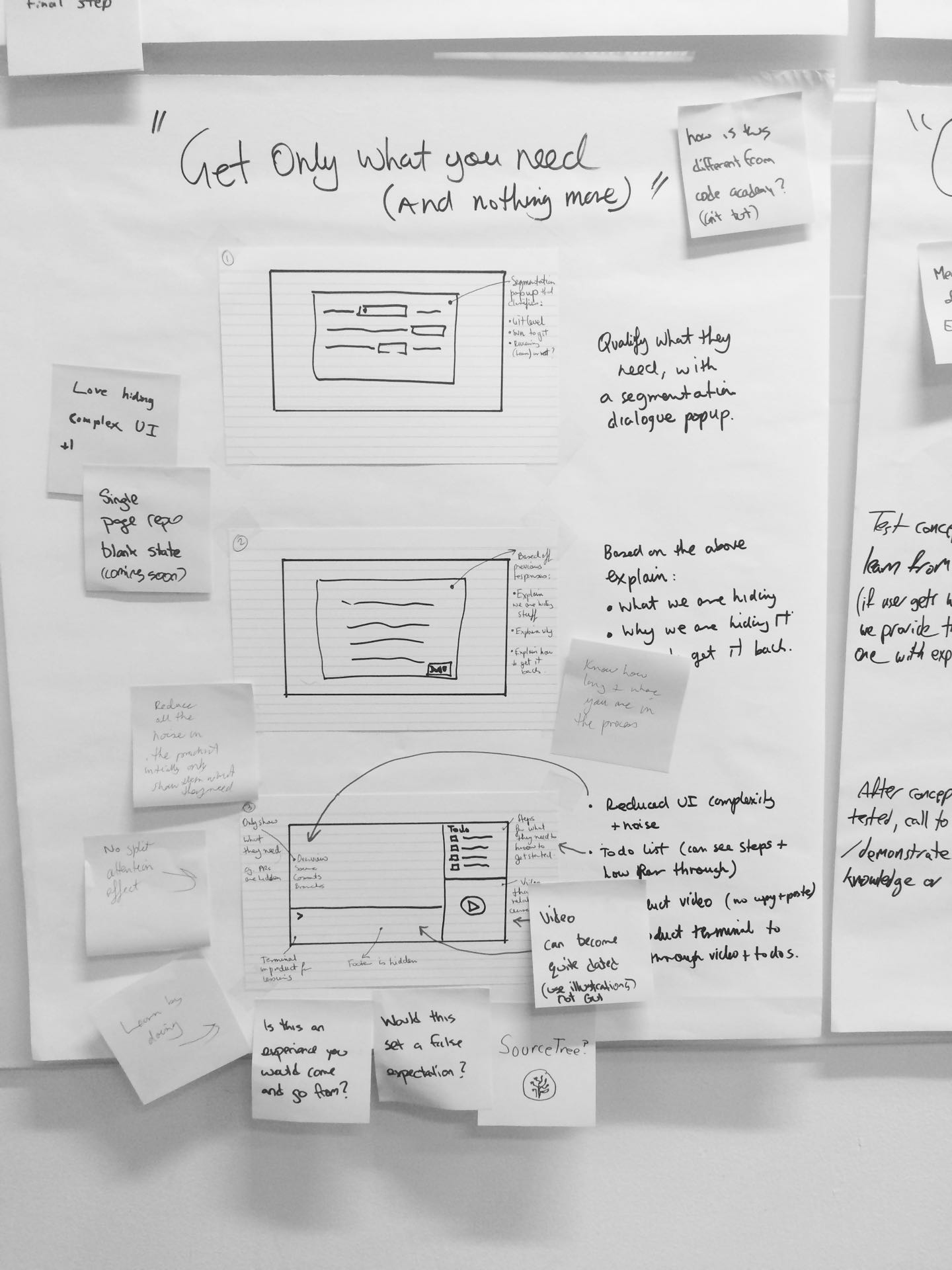

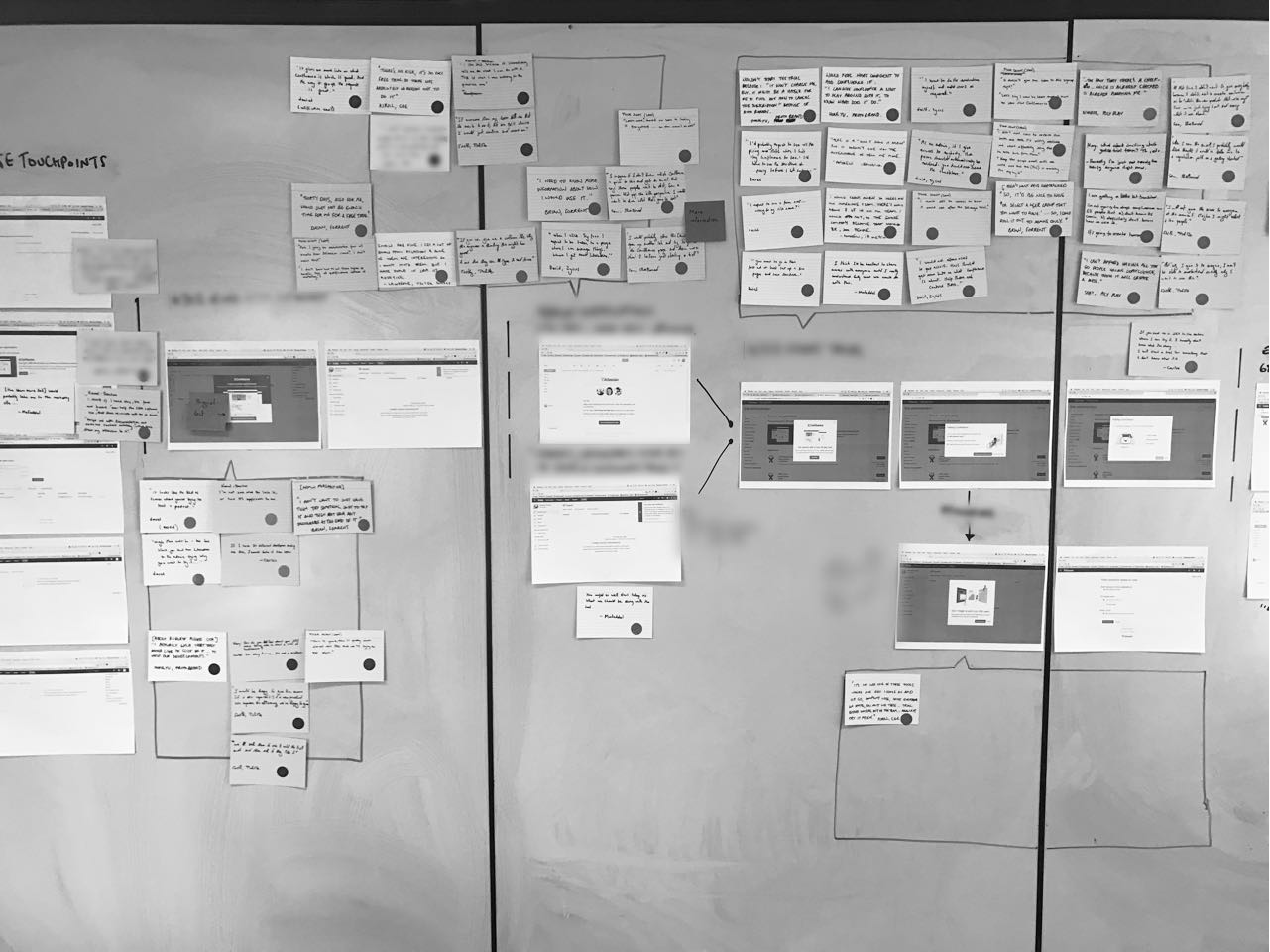

To do so, we started with work sessions to lay out the user’s journey from start to finish. Once the walls were full of screens, the team would add “questions” and “research” stickies. The point was to figure out what we knew and what we still needed to research.

Our team identifying the funnels, their pain points and missing data during a workshop.

After the workshops, the team gathered the missing data and I translated that information into digital posters explaining our users’ stories. This overview of the user journey helped us identify the main leaks in the funnel and the biggest optimisation opportunities.

A map of the funnels including analytics, NPS comments and user research insights.

Many growth specialists advocate a "quantity" framework. They embrace the random factor and advise testing as many ideas as possible until a positive result comes out.

However, this approach requires high traffic and often results in very long tests. Also, when working inside a product, random experiments will inevitably create holes in the system, making this method an expensive one.

With Atlassian’s value “Don’t #@!% the customer” in mind, we leveraged our experience to apply design thinking methodologies to product growth.

We treated experiments like diamonds and invested in a longer envisioning step that enabled more meaningful tests.

With customers at the centre of our work, we analysed the way they performed their actions to form hypotheses about their motivations. This approach helped us maximise the learnings that came out of every experiment.

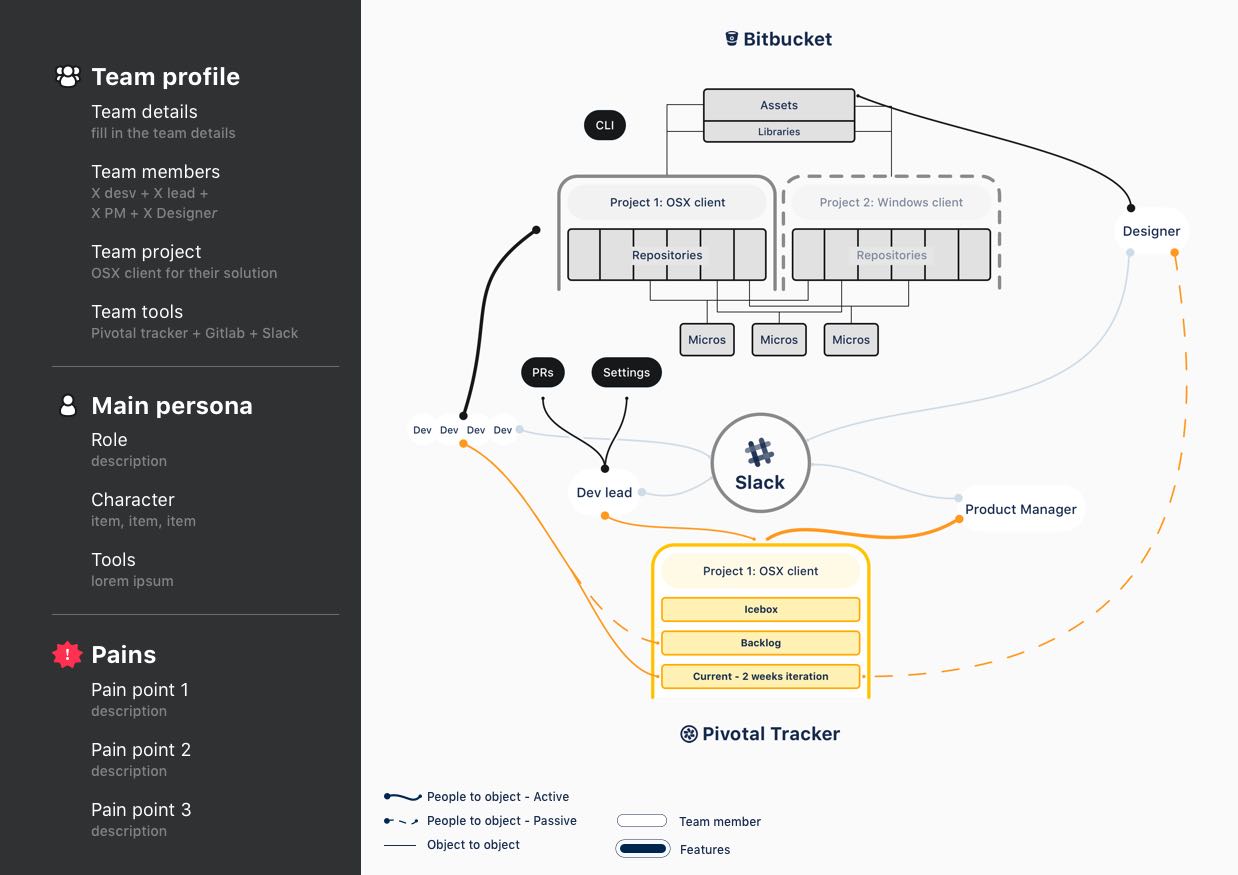

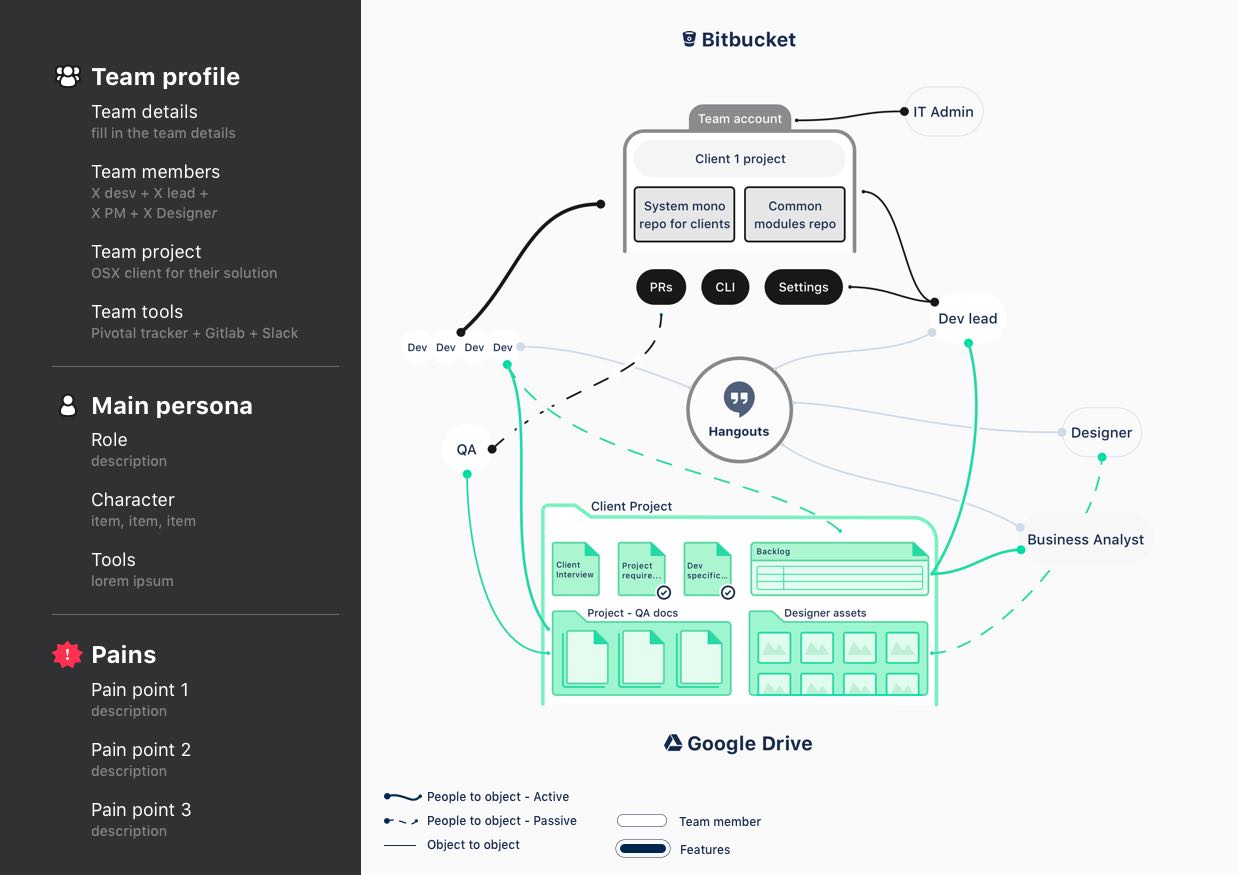

Creating team profiles

Atlassian’s software aims to ease and improve teamwork in all kinds of organisations. The role of growth in the company is to bring that software to every team, but this requires understanding what a team is and how it operates.

Thanks to specific team models created by our researchers, my manager and I built multiple “team profiles”, each a set of personas forming a team, to learn our users’ workflows.

We mapped the process of different teams, connecting team members to tools.

This visualisation gave us a better view of how teams function, which tools they use, their pain points and the benefits an Atlassian product could bring them.

Additionally, those maps helped us organise Atlassian’s millions of users into different categories and empathise with them.

Maps representing the links between the members and tools of a team profile.

One of the fundamental aspects of a growth team is its ability to generate innovative ideas. Reusing the work created during the first phase, we designed specific workshop frameworks to generate solutions that would optimise the user journeys, entirely disrupt them or open new channels.

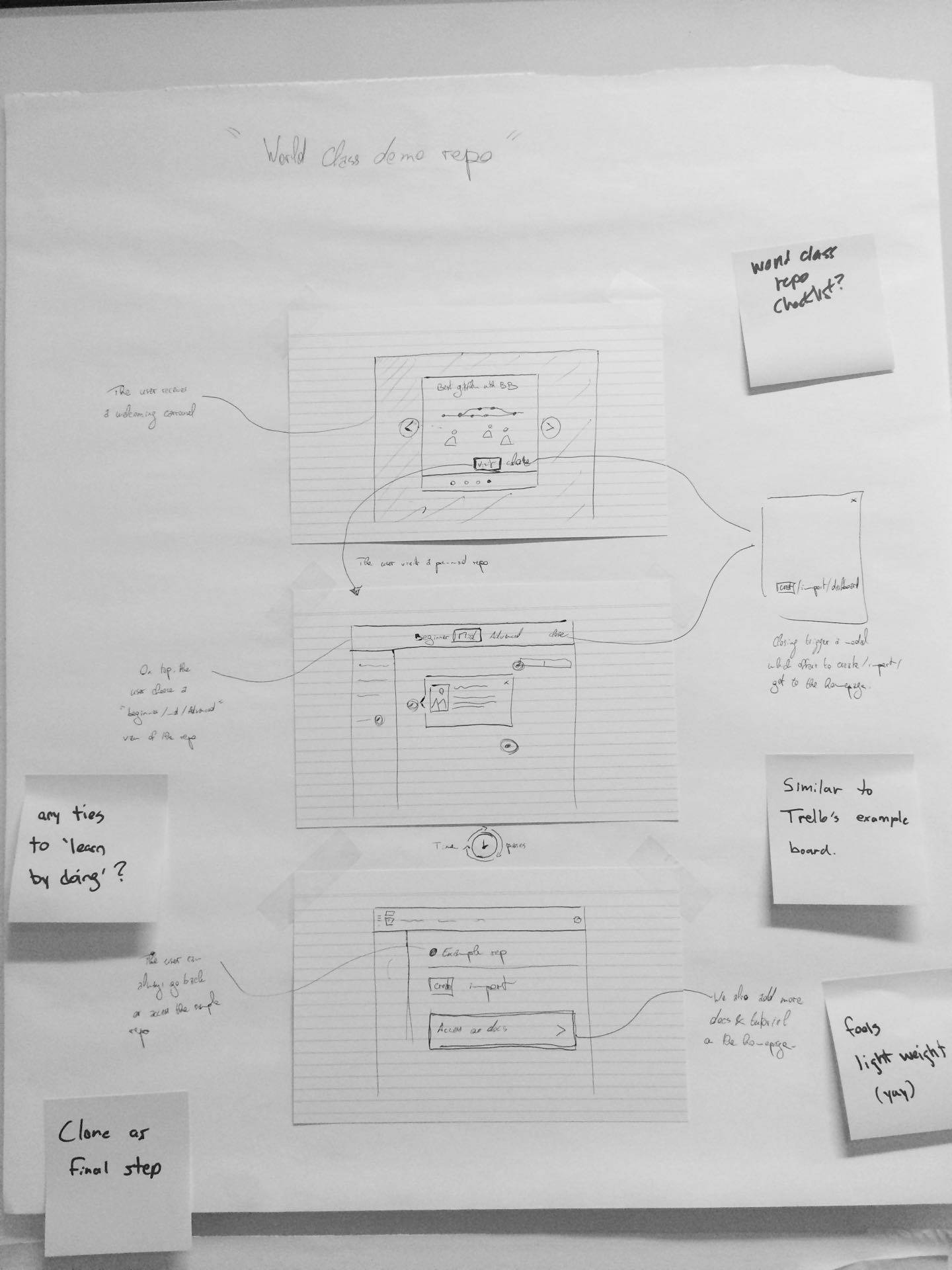

In early 2017, I participated in an initiative to improve Bitbucket’s onboarding. For two weeks, I partnered with the data analyst and product manager to perform NPS analysis, retrace the history of Bitbucket’s onboarding and interview customers, in order to organise a two-day brainstorming session.

Design principles

We kicked off the session with a global presentation involving experts, which fed the participants every bit of data we had, and then started a user journey exercise.

The team outlined what it envisioned to be the biggest frustrations of a first-time use of the software.

With this play, I wanted the team not only to build empathy with Bitbucket’s users, but also to figure out the major design issues to resolve. Through an affinity analysis, we defined the biggest areas for improvement, which we turned into design principles for the onboarding we were aiming to create.

#1

Speak my language

Commit, clone, repository, pull request, .gitignore.

Our design needs to consider that some users are totally new to DVCS while others are experts.

#2

Don't overwhelm

Today, the user is offered many controls, many paths and features.

Our design needs to consider how users get started, to avoid confusing navigation.

#3

Provide context

Why clone a repository? Why enter a commit message?

Our design needs to provide an explanation of the steps the user has to complete.

Brainstorming

The second day was dedicated to solutions. I first introduced the team to a set of personas I had created from our user research.

Then, inspired by the Google design sprint, the participants spent time creating concepts, diverging and converging to build "experiment posters" focused on optimising “Bitbucket first experience for a specific user type”.

Finally, we prioritised those ideas and turned them into experiments to start the development phase.

Photos taken during an ideation workshop that I was facilitating. Top: team generating ideas – Bottom: Idea posters.

The envisioning phase played a big role in generating experiment ideas that focused on solving the user’s pain points rather than mindlessly attempting to drive growth. Nonetheless, to capture the essence of the proposed solutions and reaffirm their potential, it is important to phrase the experiments as hypotheses.

Good hypotheses ensure a better design quality by framing the user experience around the success metrics while grounding the decisions in facts and data.

Hypothesis framework

Proposed solution

We believe that a sandbox repository

Success metrics

Will increase active users & the learning curve

Evidence & data

Because X% are looking for help

With a good hypothesis in hand, the design usually starts with user flows. Not only do they help estimate the full effort of the experiment but, once delivered, they also allow development and design work to progress in parallel.

On top of that, they help instrument the analytics events and make the post-experiment analysis easier to understand.

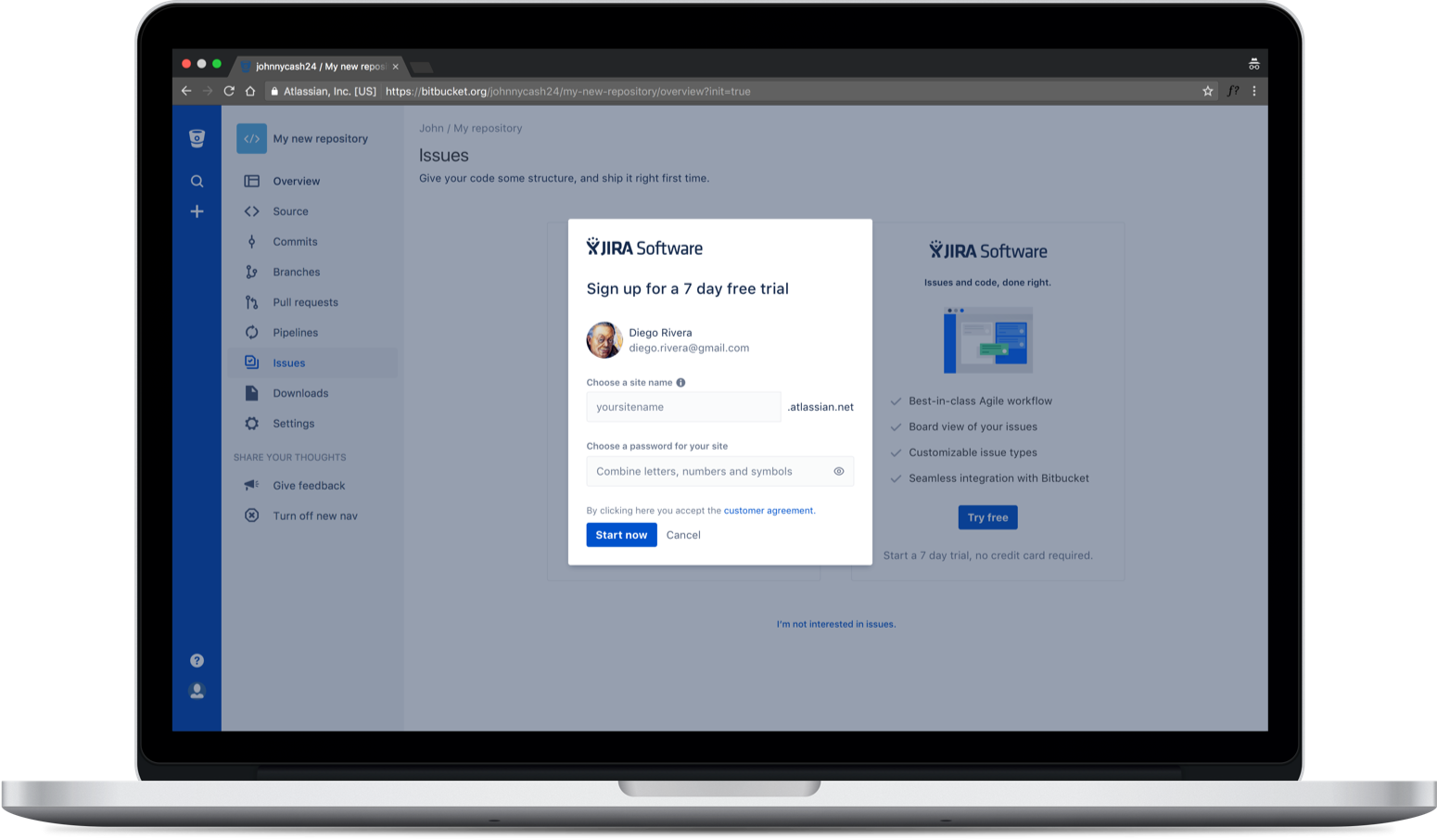

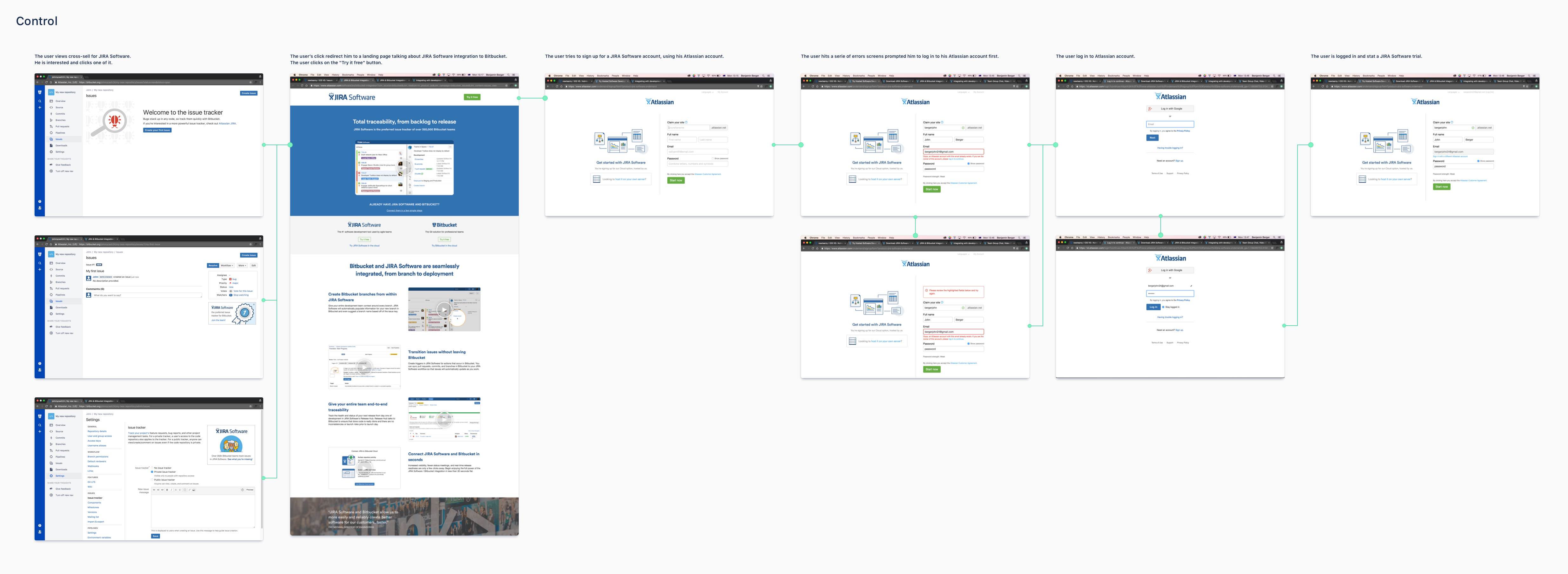

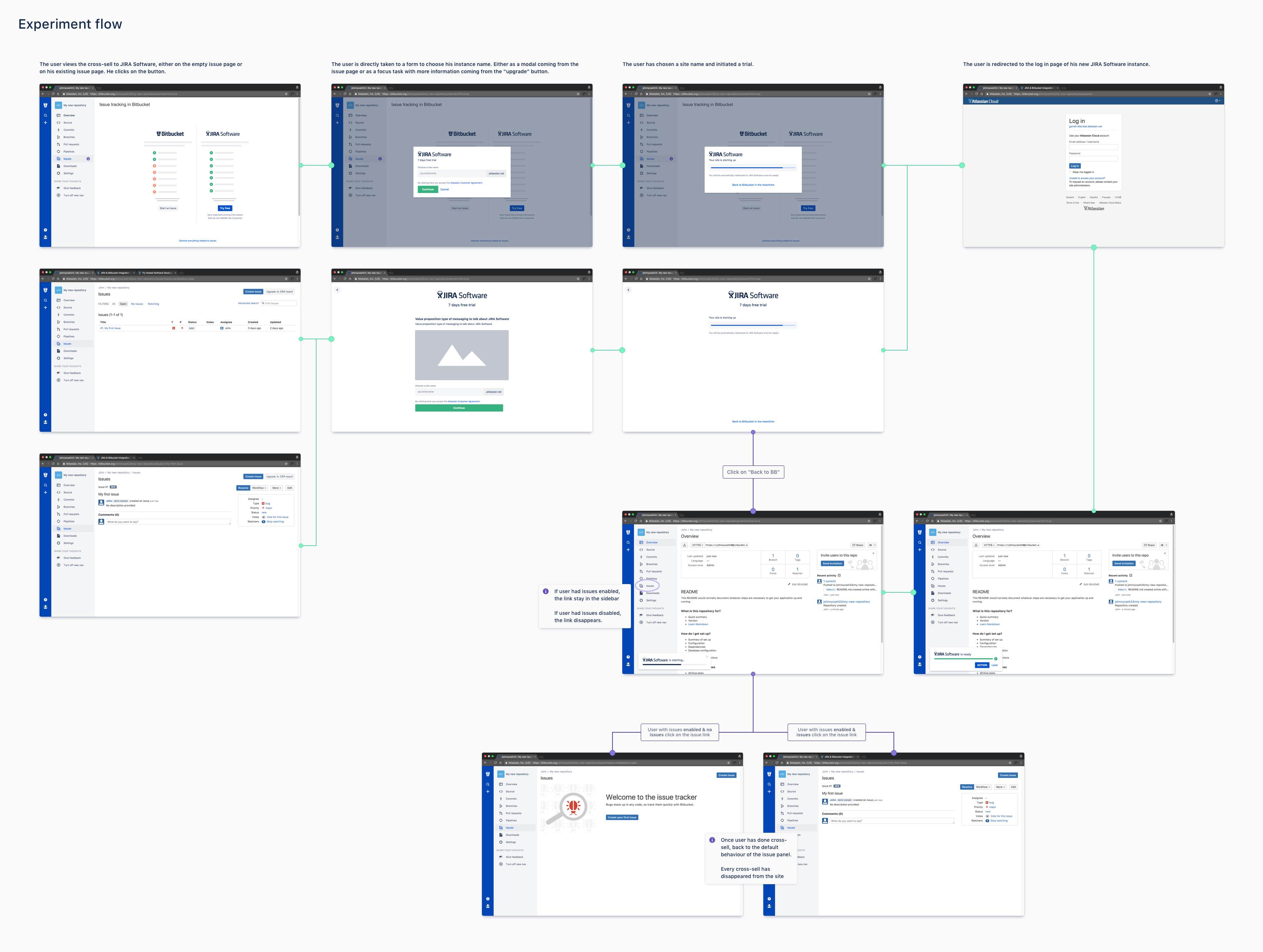

In this experiment, we aimed to simplify the signup flow from one product to another.

The control flow a user experiences when signing up to JIRA Software from Bitbucket.

The experiment flow I designed to increase the signup rate.

In growth, the team needs to execute and release at a fast pace, which means that execution is full of compromises. Hence the importance of a good hypothesis, so as not to lose sight of the core principles being tested.

To increase the overall confidence in our design decisions, our team ran online usability tests.

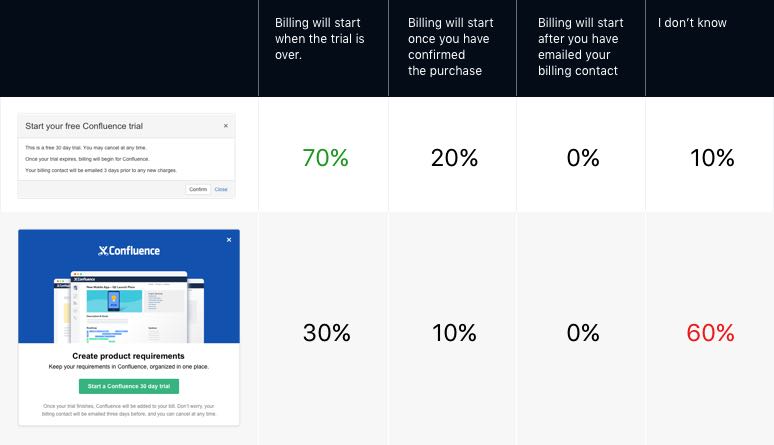

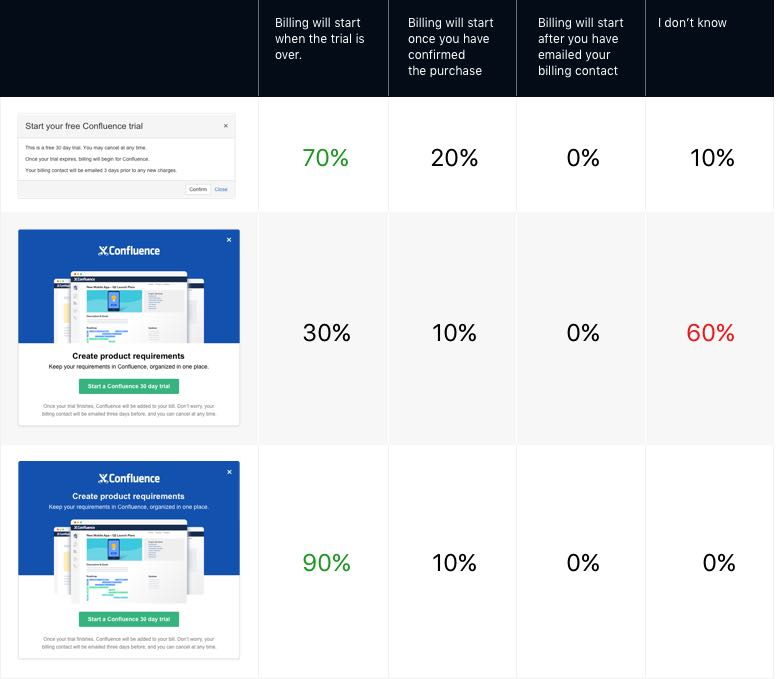

For example, in JIRA, we needed to improve the visuals and messaging of a modal dialog.

We knew that much of the information presented there consisted of detrimental factors and important legal copy.

Other designers and I set up a comprehension test with a set of four radio-button questions comparing what users remembered.

Did they remember and understand what was presented in those modal dialogs?

The results of a usability study comparing two modal dialog designs.

The results showed that our new modal caused confusion, possibly because the big block of text at the bottom was too hard to read.

We then decided to update the design, this time with a clearer separation between each type of content. The new design outperformed every other in a new test.

With more time, this mistake would have been avoided. But thanks to those quick tests, the team came to an agreement in less than a day, avoiding endless feedback sessions.

The results of a usability study comparing a revised modal dialog design, improved thanks to the results of the previous test.

The experiments we launched were often close to MVPs. We emphasised viability, but many design decisions for an experiment were delayed until the team landed on a successful direction.

At Atlassian, we believed that experiments were an indicator that helped steer the overall product in the right direction. Successful experiments increased the confidence we had in a concept and gave us more tangible proof, but they were not the final product.

The company took pride in saying that we were data-informed, not data-driven.

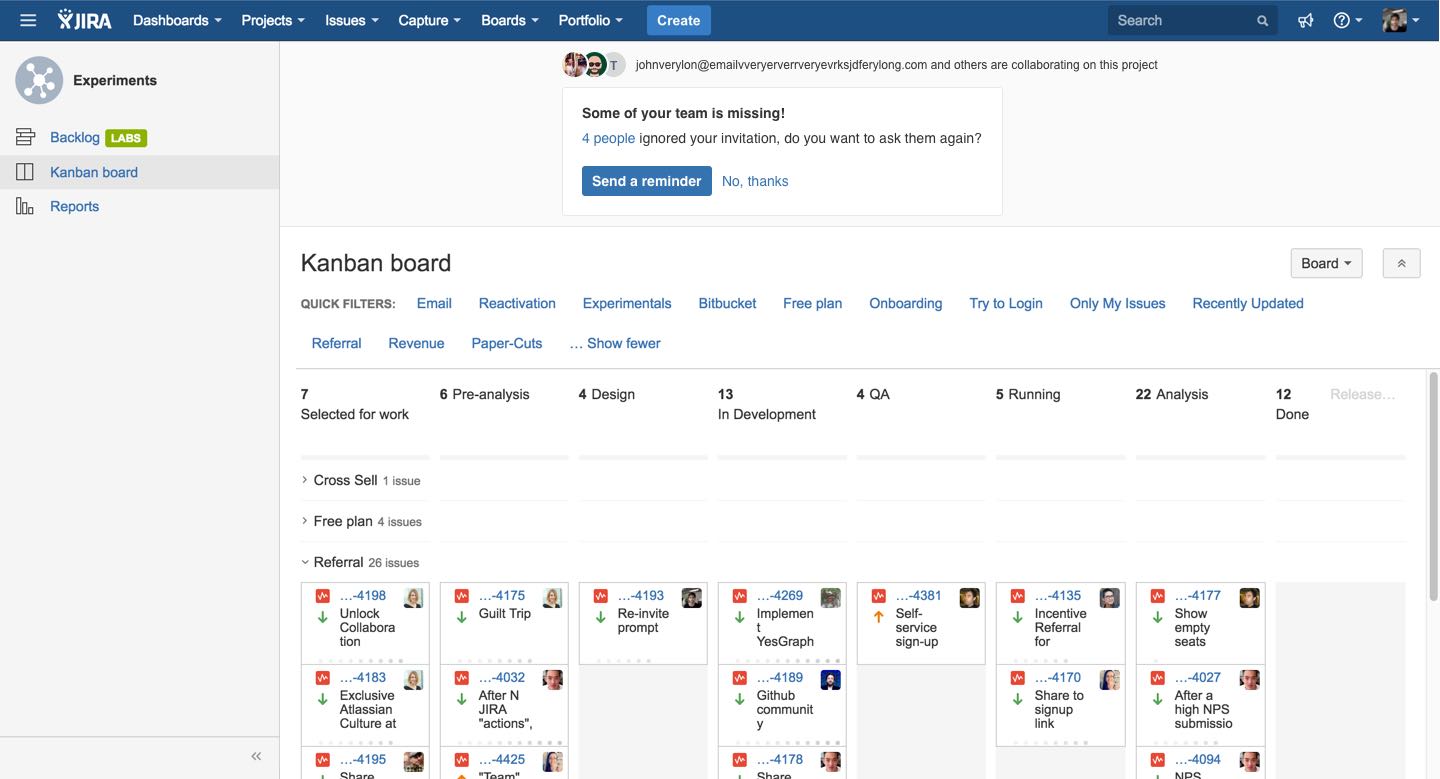

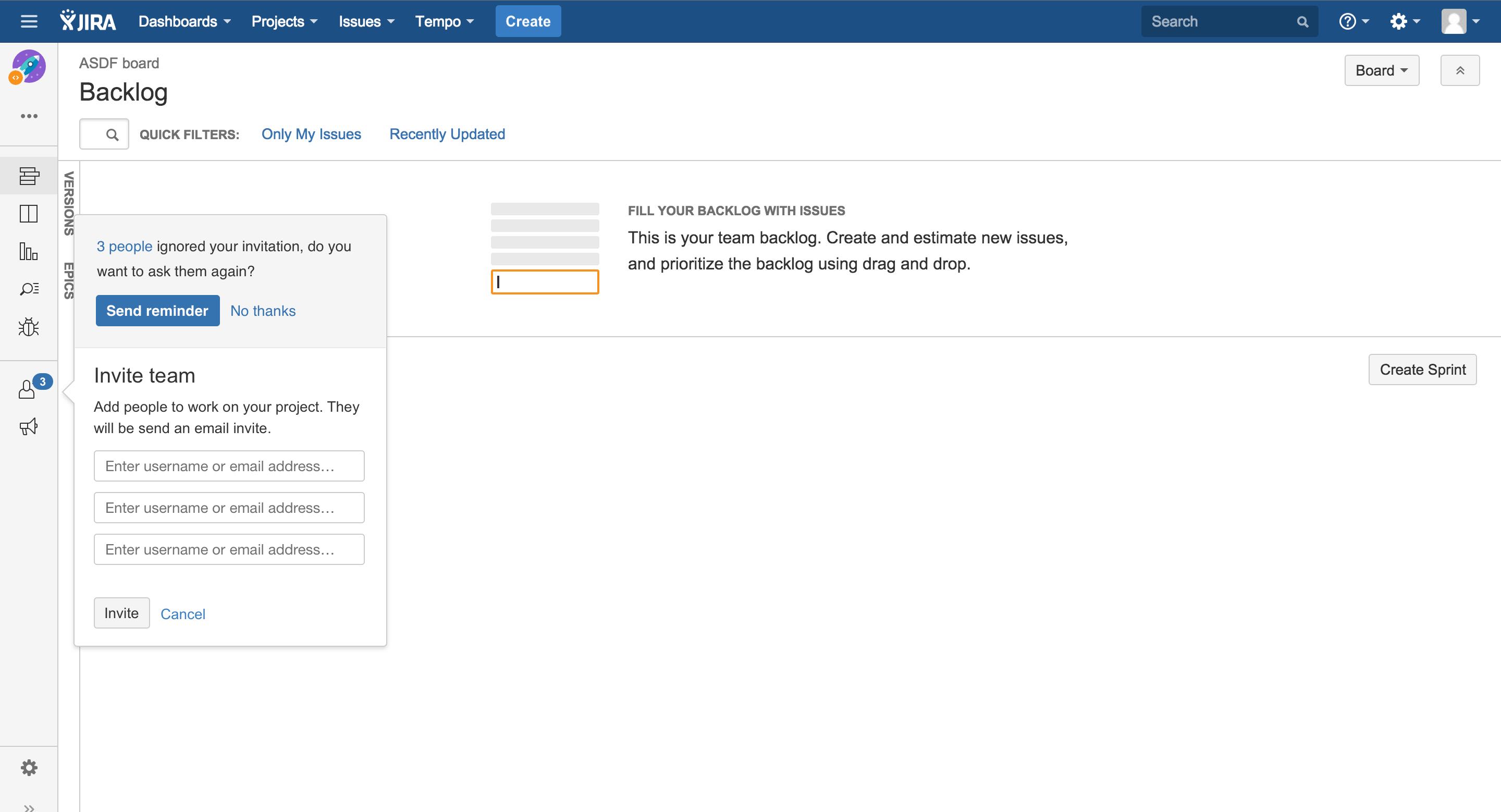

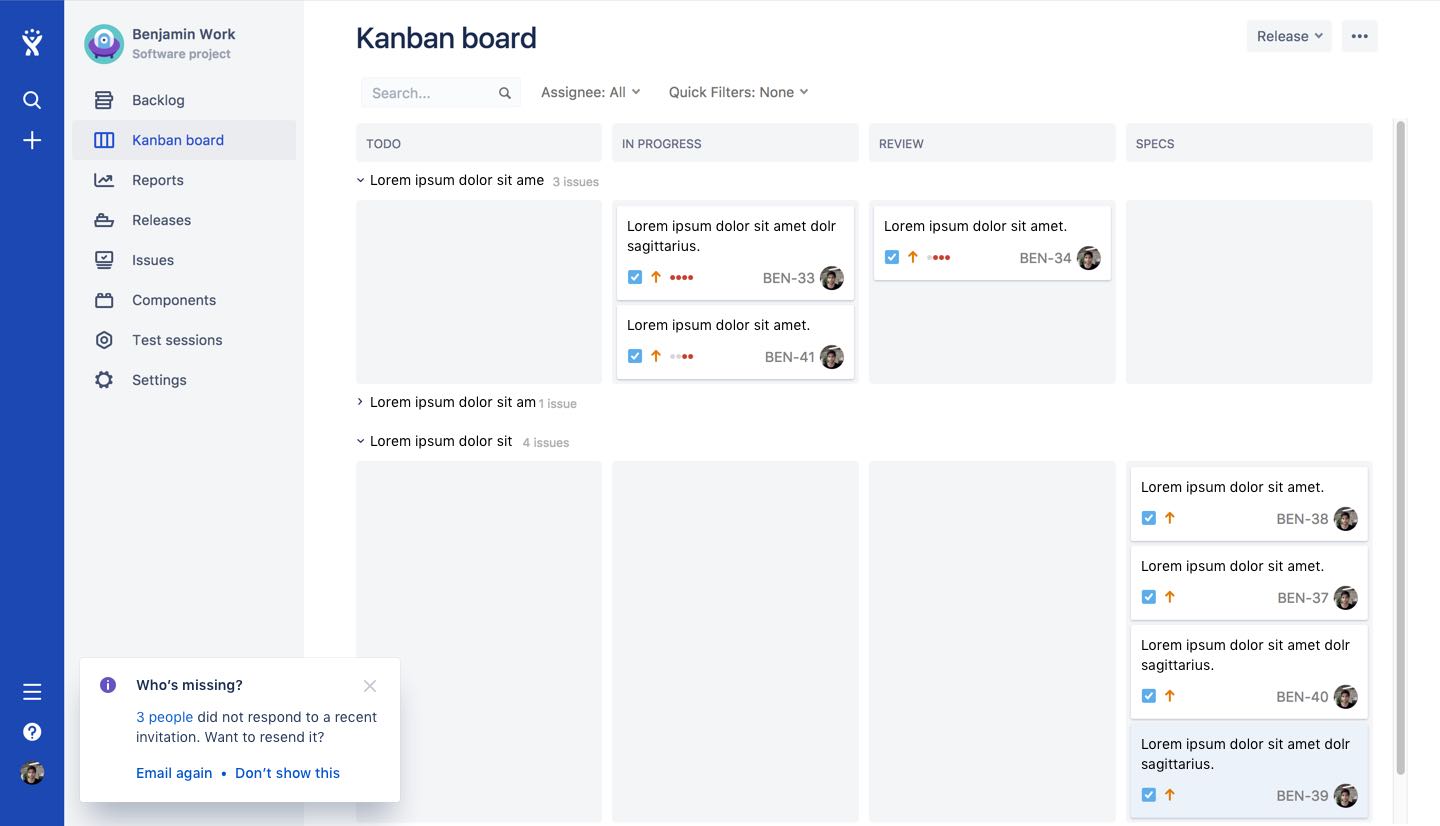

In late 2016, I worked on an experiment that reminded site administrators that some invited users had never logged in to their site and offered to re-invite them.

I tested multiple placements and messages for this concept.

Experiment variations aimed to increase the number of active users on JIRA Software.

Each variation came out as a success. A reminder was in fact useful to increase our primary metric. However, it was impossible for us to launch any of those designs in production at that time because Atlassian’s design language was being transformed.

Given that both designs, although very different, were a success, we concluded that the concept was strong enough regardless of the design execution, and I proceeded to transform the experiment into a more "product compliant" version.

The final design, revised thanks to the experiment results, launched on JIRA Software in Sept 2017.

The more complicated the experiment user flows, the harder it will be to turn the experiment into a production version.

To improve our process, we relied heavily on user research, complementing the quantitative data with very targeted qualitative insights.

Additionally, my manager introduced a play he called the ‘DNA’ workshop, where each member of the team would brainstorm, screen by screen, what they believed accounted for the success of the experiment.

During these sessions, we had the opportunity to reflect on our original intention and get back to the essence of the concept. They helped us define the requirements for the production version based on what we had learned from the A/B test.

Result of a workshop where the team brainstormed: "what is the DNA of this successful experiment".

Working in Atlassian’s product growth team has been a huge contributor to my product design education. Over two years, I learned new processes and refined my overall understanding of product design for the digital medium.

Moreover, I believe that hypotheses, experimentation, success metrics and fast idea generation are not exclusive to growth design but are important principles and techniques that can be applied to any product design method to create great solutions.
